Photogrammetry and augmented reality on mobile: A comparison of AR solutions

Feb 07, 2023 • 13 min read

There has been a noticeable surge in interest in augmented reality (AR) in recent years, as demonstrated by the release of new updates from major technology companies such as Apple and Google. With that in mind, the mobile development team at Grid Dynamics conducted some additional research into AR. We wanted a hands-on understanding of what is currently available and what technologies our clients can utilize to expand their businesses. To do this, we constructed a general overview and then decided to delve a little deeper into the subject.

It’s important for companies in all industries to stay on top of the latest technology trends to expand their business reach. Augmented reality is one of the most promising technology trends to achieve this goal. However, AR and photogrammetry are relatively new technologies, with very few solutions currently available on the market. Furthermore, some of the solutions have more or better capabilities than others, making it essential for companies looking to implement AR as part of their business strategy to research what is available on the market, evaluate the quality of the solutions, or even develop their own.

With our retail and digital commerce customers in mind, we chose a task that would be useful in their specific businesses. Thus, we created a solution and proof-of-concept (PoC) application for a hypothetical app called “Scan and Show.” The app scans an object and displays it in AR mode. This enables customers to view items in 3D, try on items, or see how they would fit in an interior space. Additionally, there are practical applications for 3D scanning items in shops and adding them to AR showcases.

The premise

Our hypothetical app “Scan and Show” can scan objects in video or photo formats, using cloud storage for raw images and final 3D models. This app also includes a photogrammetry service to convert images into 3D models and render apps for displaying 3D models in AR mode.

The scanner

There are several ways to capture real-world objects for 3D reconstruction, including digital single-lens reflex (DSLR) cameras, drones, 3D scanners, and mobile phones. Each method has its advantages. For example, DSLR cameras are suitable for capturing detailed textures and providing high image resolution, while drones are good for scanning large open areas and objects like buildings. 3D scanners excel at capturing the shapes of objects, and mobile phones are a convenient option because they are always readily available and can provide high-quality image resolution and depth maps. Overall, mobile phones may be the most practical choice for scanning objects in 3D. Let’s explore whether this is feasible in practice. There are two approaches to scanning objects with a mobile device: recording a video or taking photos.

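Recording a video is more user-friendly because it provides a smooth process with many frames. However, since most photogrammetry tools require photo input, we would need to convert the video into a series of photos to use it. We did it with ffmpeg in a small script, where the second script argument sets the number of frames extracted per second (1 by default) and each frame is scaled to a width of 2160 pixels:

ffmpeg -i input.mp4 -vf "fps=${2:-1},scale=2160:-1" out4K/img%03d.jpg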

After conducting a few tests, we decided that there are better approaches than video. Not all phones can capture video at 4K resolution yet; most are limited to Full HD resolution. For photogrammetry, a higher resolution is generally preferred. Even basic phones can take 12 MP photos, which is more than the roughly 8.3 MP of a single 4K video frame. Another critical factor to consider is depth maps. While phone cameras can capture photos with depth map data, this is not an option for video. Depth maps can be handy for some photogrammetry software and can dramatically improve the quality of the final result.
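The resolution argument can be checked with simple arithmetic. The following is a minimal sketch, not part of our app: the class and method names are made up for illustration, and the 4032 x 3024 photo size is an assumption about a typical 12 MP sensor with a 4:3 aspect ratio.

```java
import java.util.Locale;

class ResolutionComparison {
    /** Returns the pixel count of a frame in megapixels (millions of pixels). */
    static double megapixels(int width, int height) {
        return (double) width * height / 1_000_000.0;
    }

    public static void main(String[] args) {
        // Standard video frame sizes.
        double uhd = megapixels(3840, 2160);    // 4K UHD
        double fullHd = megapixels(1920, 1080); // Full HD
        // Assumption: a 12 MP photo stored as 4032 x 3024 pixels.
        double photo = megapixels(4032, 3024);

        System.out.println(String.format(Locale.ROOT, "4K video frame:      %.2f MP", uhd));
        System.out.println(String.format(Locale.ROOT, "Full HD video frame: %.2f MP", fullHd));
        System.out.println(String.format(Locale.ROOT, "12 MP photo:         %.2f MP", photo));
    }
}
```

Under these assumptions, a single photo carries more pixels than a 4K frame and several times more than a Full HD frame, which is why photos give the photogrammetry step more detail to work with.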

Depth map

A depth map is the additional data contained within a photo file that helps distinguish what is in the foreground from what is in the background. It can be rather useful for certain apps, such as creating a portrait or parallax effects on social media. Modern phones can generate depth map data from LiDAR (which provides the best result), dual camera setups, or by taking two pictures with a single camera and using intelligent algorithms. You can find more info about depth maps here.

For our research purposes, the best option was to take photos. To make the process more convenient, we added features such as an auto timer and a photo counter to help users take a sufficient number of acceptable-quality photos. This is just one example of how the image-capturing process can be improved. There are many other possibilities, such as adding an AR dome. But the photo app is not the main focus of our scanning solution; the photogrammetry service is. Let’s turn our attention to it.

The photogrammetry service

The critical component of our research was photogrammetry. To start things off, we established the key platforms that can provide the tools for this research project: ARKit, Meshroom, PhotoModeler, MicMac, and 3DF Zephyr.

Of course, they’re not the only options available. But for the purpose of our test, we’ll be focusing on these specific ones.

Case #1

- 60 photos

- No special lighting

- No turntable or special background

We got these images from our scan app without making any changes to them.

Case #2

- 119 photos

- Even lighting

- Turntable

- White background

- Several positions of the object

This is an image sample featuring additional tools like a turntable and professional lighting.

Your best bet is to try different photogrammetry software and services to process these images and generate 3D models. Let’s compare the results to determine which service produces the most accurate and detailed 3D models.

Meshroom

Meshroom is a framework built on top of AliceVision.

One of the benefits of Meshroom is that it is free, open-source, and available on multiple operating systems such as Linux, Mac, and Windows. It also offers visual tools and a command-line interface for users to choose from.

| Pros | Cons |
| --- | --- |
| Free | Requires an NVIDIA GPU card with CUDA |
| Open-source | Output quality isn’t great |
| Supported on Linux, Mac, and Windows | |
| Has a visual tool and a CLI | |

The framework produces great results after some fine-tuning; however, our goal was to compare the immediate image results.

The juice box model quality is rather low. The shoe model quality is average, and it requires additional work in a 3D editor.

3DF Zephyr

3DF Zephyr is another photogrammetry tool that has a visual editor, a terminal application, and a container image, not to mention a free tier option. It comes as an already robust and scalable solution that nearly meets current industry standards. However, considering that our goal is to compare different solutions, let’s move on to analyzing the results.

| Pros | Cons |
| --- | --- |
| There is a Linux container for AWS (EC2 or Fargate) | Visual editor is a Windows app |
| Has free tier (only up to 50 photos per object, or 1 euro licence for non-commercial projects) | Out-of-the-box image quality isn’t perfect |
| Direct export to GLB and USDZ formats | |
| Fargate is much cheaper than EC2 Mac instances (required for Apple’s solution), and much better for scalability | |

The juice box model quality is again relatively low. The shoe model quality is good, but it requires some extra work on the details, such as the removal of the remaining parts of the turntable.

ARKit and RealityKit object capture

With the solution from Apple, we used a command-line app that works with the RealityKit Object Capture API to transform images into a 3D model.

| Pros | Cons |
| --- | --- |
| Simplest way to try photogrammetry | Requires a powerful macOS machine with a 4 GB GPU or an Apple silicon CPU |
| Best result out-of-the-box | Output format is USDZ* |
| Supports images with Depth Map | |

If you want to build a fully functional and scalable cloud solution, you should keep in mind that platforms like Google Cloud do not currently have macOS support. Currently, the best alternative is to host it on Amazon EC2, but it can be costly.

These are the images without depth data:

With depth data:

The juice box model quality is low if we don’t provide the depth data. Surprisingly, the shoe model quality is superb, even without the depth data. From this, we can conclude that depth data can dramatically change performance with low-quality image sources. For high-quality source photos, extra depth data is unnecessary.

PhotoModeler and MicMac

Despite the effectiveness of PhotoModeler and MicMac, we’ve excluded them from our research, as they do not fully meet our predefined requirements and expectations.

PhotoModeler primarily captures real-world objects and scenes with markers, makes measurements, and helps in manufacturing processes. There is no straightforward method of obtaining a 3D model from a set of photos. So, it is not suitable for our case. MicMac is a specialized software that requires a specific setup for certain cases, and it doesn’t appear to be a versatile and user-friendly solution for our needs.

Cloud storage for raw images

The primary objective of this research and article is not to create a fully production-ready app, but to learn best practices for doing so in the future. Therefore, the cloud storage provider wasn’t a crucial factor for us. We chose Firebase because it was deemed the simplest, most cost-effective and fastest integration solution. It may not be the best for your final product, but for theoretical purposes, it suffices.

Using the photo scanner app is pretty straightforward. First, we created a bucket with a similar name to the scanned object’s name. Next, the app uploads all captured photos to the bucket, and that’s all there is to it.

Since the scanner app is a native app for iOS and Android, we can use the native SDKs (iOS, Android) to interact with Cloud Storage for Firebase.

In the next step, the photogrammetry app downloads images for processing. Downloading images from the cloud is made easy with gsutil.

gsutil cp -r gs://{bucketName}/{objectName} input

3D model types

The Render App is the last part of our “common solution” diagram, but let’s discuss the 3D model formats suitable for different platforms before we jump into the photogrammetry processing solution.

OBJ

An OBJ (current version 3.0) file contains information about the geometry of 3D objects. The files are used for exchanging information, CAD, and 3D printing. OBJ files can support unlimited colors, and one can define multiple objects. The objects in an OBJ file are defined by polygon faces (defined by vertexes or points), curves, texture maps, and surfaces. OBJ is a vector file, which makes the defined objects scalable. There’s no maximum file size. The format was created in the 1980s by Wavefront Technologies for its Advanced Visualizer animation software (Autodesk Maya has since replaced Advanced Visualizer). Many companies have adopted the OBJ format for use in their applications.

GLB and GLTF

From Marxentlabs about GLB:

GLB is a 3D file format that’s used in Virtual Reality (VR), Augmented Reality (AR), games, and web applications because it supports motion and animation. Another advantage of the format is its small size and fast load times. GLB files are a binary version of the GL Transmission Format (glTF) file, which uses JSON (JavaScript Object Notation) encoding. So, supporting data (like textures, shaders, and geometry/animation) is contained in a single file.

The Khronos Group developed the GLB and glTF formats in 2015. They saw a need for formats that developers could open and edit in many graphics and 3D apps. Version 2.0 of the specification (released in 2017) added Physically Based Rendering (PBR), which allows shadows and light to appear more realistic, as well as coding updates for speed and improvements in animation.

GLB, like glTF, is a free way to encode 3D data. A GLB file will generally be smaller (approximately 33 percent) than a glTF file and its supporting files. It’s also easier to upload a single file to a server rather than two or three, so GLB has an advantage there too. GLB files can be compressed with the Draco library.
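Because GLB is a single binary container, a file can be sanity-checked before it is uploaded or rendered. The sketch below is an illustration rather than code from our project: it reads the 12-byte header that the glTF 2.0 specification defines for GLB (a magic value spelling "glTF", a version, and the total file length, all little-endian). The class name `GlbHeader` is hypothetical.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

class GlbHeader {
    /** ASCII "glTF" read as a little-endian 32-bit integer. */
    static final int MAGIC = 0x46546C67;

    final int version;
    final long totalLength;

    GlbHeader(int version, long totalLength) {
        this.version = version;
        this.totalLength = totalLength;
    }

    /** Parses the first 12 bytes of a GLB file; throws if they are not a GLB header. */
    static GlbHeader parse(byte[] data) {
        if (data.length < 12) {
            throw new IllegalArgumentException("A GLB header needs at least 12 bytes");
        }
        ByteBuffer buf = ByteBuffer.wrap(data).order(ByteOrder.LITTLE_ENDIAN);
        if (buf.getInt() != MAGIC) {
            throw new IllegalArgumentException("Missing glTF magic, not a GLB file");
        }
        int version = buf.getInt();
        // The length field is unsigned, so widen it to a long.
        long totalLength = Integer.toUnsignedLong(buf.getInt());
        return new GlbHeader(version, totalLength);
    }

    public static void main(String[] args) {
        // Synthetic header for demonstration: version 2, total length 12 bytes.
        byte[] sample = ByteBuffer.allocate(12).order(ByteOrder.LITTLE_ENDIAN)
                .putInt(MAGIC).putInt(2).putInt(12).array();
        GlbHeader header = parse(sample);
        System.out.println("version=" + header.version + ", length=" + header.totalLength);
    }
}
```

In a real pipeline the bytes would come from the start of the converted model file; comparing `totalLength` with the actual file size is a cheap way to catch truncated uploads.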

USDZ

What Marxentlabs says about USDZ:

USDZ is a 3D file format that displays 3D and AR content on iOS devices without downloading special apps. Users can easily share this portable format, and developers can exchange it between applications in their 3D-creation pipeline. The USDZ format lets iPhone and iPad users on iOS 12 view and share AR files. USDZ offers portability and native support, so users do not need to download a new app for each viewing situation. Users can easily share USDZ files with other iOS users. Currently, Android does not support USDZ and has its own equivalents, glTF and GLB.

Essentially, glTF is a standard format for AR 3D files. GLB is the same format in binary form, while USDZ, developed by Apple together with Pixar, serves the same purpose on Apple platforms. OBJ is an older format that is less suitable for modern 3D engines, but it can serve as a bridge between USDZ and GLB.
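The format choice per platform can be captured in a small lookup. This is a hedged sketch: the `ModelFormat` enum and its `forPlatform` helper are hypothetical names, and the media types are the IANA-registered ones for these formats, added here as a convenience for setting content types on uploaded files rather than something our PoC relied on.

```java
import java.util.Locale;

enum ModelFormat {
    GLTF("gltf", "model/gltf+json"),
    GLB("glb", "model/gltf-binary"),
    USDZ("usdz", "model/vnd.usdz+zip");

    final String extension;
    final String mediaType;

    ModelFormat(String extension, String mediaType) {
        this.extension = extension;
        this.mediaType = mediaType;
    }

    /** Picks the format a render app would request; platform names are illustrative. */
    static ModelFormat forPlatform(String platform) {
        switch (platform.toLowerCase(Locale.ROOT)) {
            case "ios":
                return USDZ; // rendered natively on Apple devices
            case "android":
                return GLB;  // single-file binary glTF
            default:
                throw new IllegalArgumentException("Unknown platform: " + platform);
        }
    }

    /** Builds a file name such as "shoe.glb" for a scanned object. */
    String fileNameFor(String objectName) {
        return objectName + "." + extension;
    }
}
```

For example, `ModelFormat.forPlatform("android").fileNameFor("shoe")` yields `shoe.glb`.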

Photogrammetry processing

After conducting a preliminary review of various photogrammetry tools, we focused on a solution from Apple, which stood out because it was free, provided the best out-of-the-box results, and was the easiest to use (just a console app that takes images as input and outputs a 3D model as a result).

As mentioned before, the best formats for Android devices are glTF and GLB, but unfortunately, the solution from Apple doesn’t provide these formats. The only format available is USDZ, which is not a surprise. To overcome this limitation, we added a small modification to the code, so we can now export 3D models in OBJ format and convert them to any other format.

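import Foundation
import ModelIO

// `url` is the file URL of the USDZ model produced by the photogrammetry step.
let objPath = "output/out.obj"
let modelAsset = MDLAsset(url: url)
modelAsset.loadTextures()
do {
    try modelAsset.export(to: URL(fileURLWithPath: objPath))
    print("Exported to OBJ!")
} catch {
    print("OBJ export failed: \(error)")
}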

That’s it: now we have both OBJ and USDZ formats.

The next step is to convert OBJ to either glTF or GLB. For that, we used the obj2gltf tool.

npm install -g obj2gltf

obj2gltf -i model.obj -o model.gltf

obj2gltf -i model.obj -o model.glb

Cloud storage for 3D models

The situation here is similar to storing raw images: we didn’t need anything extraordinary, so we stuck with the same standard storage option.

We are already familiar with gsutil, and it can also handle file uploads:

gsutil cp {objectName}.gltf gs://{bucketName}

Operating systems and libraries

Android

We tested out a few different solutions on Android:

Scene Viewer (Android built-in viewer)

The easiest way to display any 3D object in AR on Android is to use the built-in viewer, which is already available on your mobile device. You can try it out directly from Google Search results. For example:

- Search for “dog”;

- Look for “View in 3D” in the search results; and

- Try viewing a Labrador Retriever to see if it would fit in your apartment.

This article describes how we can use the built-in viewer with custom objects. In a nutshell, you can integrate it into your website or call it from an Android app using an intent.

HTML:

<a href="intent://arvr.google.com/scene-viewer/1.0?file=https://raw.githubusercontent.com/KhronosGroup/glTF-Sample-Models/master/2.0/Avocado/glTF/Avocado.gltf#Intent;scheme=https;package=com.google.android.googlequicksearchbox;action=android.intent.action.VIEW;S.browser_fallback_url=https://developers.google.com/ar;end;">Avocado</a>

Java:

Intent sceneViewerIntent = new Intent(Intent.ACTION_VIEW);

sceneViewerIntent.setData(Uri.parse("https://arvr.google.com/scene-viewer/1.0?file=https://raw.githubusercontent.com/KhronosGroup/glTF-Sample-Models/master/2.0/Avocado/glTF/Avocado.gltf"));

sceneViewerIntent.setPackage("com.google.android.googlequicksearchbox");

startActivity(sceneViewerIntent);
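When the model comes from our own cloud storage rather than a fixed sample, the Scene Viewer link has to be assembled at runtime. The helper below is a sketch under assumptions: `SceneViewerLink` is a hypothetical class, the example.com URL is a placeholder, and the `mode` parameter with values such as `ar_preferred` is taken from Google's Scene Viewer documentation as we understand it. The model URL is percent-encoded so that any query string of its own does not clash with the Scene Viewer parameters.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

class SceneViewerLink {
    static final String BASE = "https://arvr.google.com/scene-viewer/1.0";

    /**
     * Builds a Scene Viewer URL for a hosted glTF or GLB model.
     * The result can be passed to Uri.parse(...) in the intent shown above.
     */
    static String build(String modelUrl, String mode) {
        String encodedModel = URLEncoder.encode(modelUrl, StandardCharsets.UTF_8);
        return BASE + "?file=" + encodedModel + "&mode=" + mode;
    }

    public static void main(String[] args) {
        // Placeholder URL; a real app would use the download URL of the uploaded model.
        System.out.println(build("https://example.com/models/shoe.glb", "ar_preferred"));
    }
}
```

Keeping the URL construction in plain Java, free of Android classes, makes it easy to unit test outside a device or emulator.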

Simple and fast. However, our goal is to try out as many different tools as possible. So, let’s look at a few other options:

Sceneform

Sceneform was a library that you could find in early Google workshops and articles.

However, it has since been archived and is no longer maintained by Google. As a result, many of the old samples are no longer useful.

There are still some maintained versions of this library available online.

In our experiments, we encountered a few issues when working with Sceneform, such as limited support for animation and limitations on file size. Therefore, we decided to explore other options to find something more suitable.

Sceneview

This is a technology that has been developed to replace Sceneform. It’s important not to confuse it with Scene Viewer. The similar-sounding names can be confusing, but this technology uses the Kotlin programming language and has some distinct advantages.

This project is well maintained, and during our experiment it demonstrated good performance with glTF files of various sizes, whether they contained animations or not.

Additionally, it performed well when loading 3D models directly from the cloud.

So, for us, it served as a valuable testing ground.

ARCore

All the libraries described above use ARCore to place AR objects in the real world.

It supports a wide range of platforms, including native Android and iOS, as well as popular 3D game engines like Unity and Unreal. For our specific needs, the best way to interact with ARCore was through Sceneview on the Android side, while on the iOS side, another native solution was a better fit. However, having a joint code base or shared resources may be desirable, in which case, using a cross-platform solution such as Unity or Unreal Engine is an excellent option to consider.

Render app for iOS

SceneKit

Apple’s iOS platform offers a smooth, high-quality solution as standard. In this case, that solution is ARKit, a framework developed by Apple to implement augmented reality. ARKit works with USDZ models and uses SceneKit as the render engine to place them in the real world.

SceneKit enables developers to create 3D scenes and animations, and add 3D objects to existing iOS and macOS apps. It provides a simple, high-level API for creating 3D scenes and rendering them on screen. SceneKit automatically handles many low-level tasks associated with 3D rendering, such as managing the graphics context and memory, and rendering the scene.

Unity and Unreal

There are also a few cross-platform solutions. For example, ARCore, as mentioned earlier, has plugins for the Unity and Unreal game engines. Similarly, ARKit has plugins for these popular 3D engines. So, if you don’t have experience with native Android or iOS development but are interested in experimenting with augmented reality, these game engines can be a great alternative.

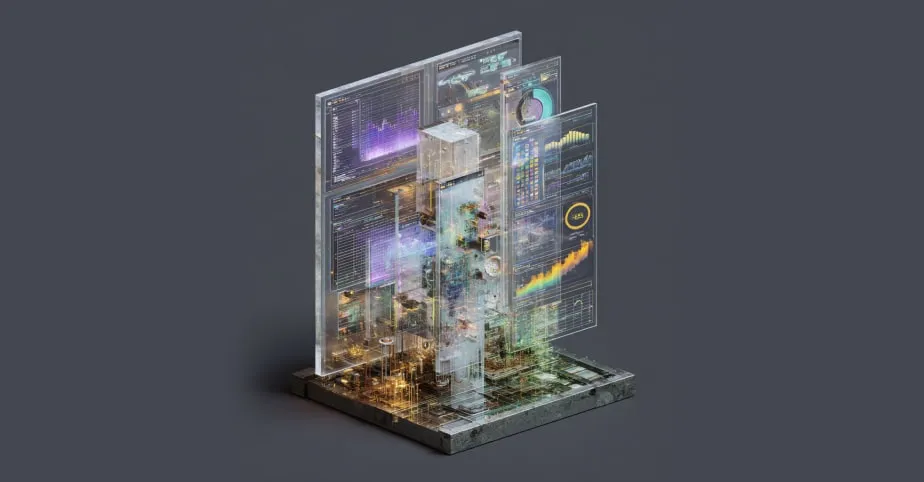

Results

Here’s what we found at the end of our journey:

This scheme represents our final solution.

We have two native software scanners, one for iOS and another for Android. These scanners capture photos of the scanned object and store them in a Firebase Cloud Storage bucket. Once the photos are saved, a notification is sent to our on-premises macOS laptop, which is a cost-effective alternative to expensive cloud instances running macOS. This laptop runs a bash script that carries out the photogrammetry task, converts the photos into the appropriate 3D formats, and uploads the 3D files back to the cloud bucket. Additionally, we have created two native mobile apps, known as Render apps, which can read 3D objects directly from the cloud and render them using AR technology.
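The processing steps on the laptop can be summarized as an ordered list of commands. Our actual implementation is a bash script; the sketch below only models the same sequence in Java for illustration and does not execute anything. The `PipelinePlan` class, the `./photogrammetry-cli` executable name, and the `input`/`output` folder layout are assumptions, while the `gsutil` and `obj2gltf` invocations mirror the commands shown earlier in this article.

```java
import java.util.List;

class PipelinePlan {
    /** Returns the commands, in order, for turning uploaded photos into 3D models. */
    static List<List<String>> commandsFor(String bucket, String objectName) {
        String usdz = "output/" + objectName + ".usdz";
        String glb = "output/" + objectName + ".glb";
        return List.of(
                // 1. Download the raw photos of the scanned object.
                List.of("gsutil", "cp", "-r", "gs://" + bucket + "/" + objectName, "input"),
                // 2. Run the photogrammetry app (hypothetical name); it writes the USDZ
                //    model and, with the OBJ export modification, output/out.obj.
                List.of("./photogrammetry-cli", "input/" + objectName, usdz),
                // 3. Convert the OBJ export to GLB for Android.
                List.of("obj2gltf", "-i", "output/out.obj", "-o", glb),
                // 4. Upload both models back to the bucket.
                List.of("gsutil", "cp", usdz, glb, "gs://" + bucket));
    }

    public static void main(String[] args) {
        // Placeholder bucket and object names.
        for (List<String> command : commandsFor("my-bucket", "shoe")) {
            System.out.println(String.join(" ", command));
        }
    }
}
```

Each inner list could be handed to `ProcessBuilder` to run the step and check its exit code before moving on, which is the main thing a production version would add over a plain script.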

It was developed at a bench scale, but it is a fully functional system that can scan an object using photogrammetry and overlay it onto the real world using AR technology. It has served as our playground for experimenting with different tools and platforms. Additionally, it has given us insight into how AR and photogrammetry can be applied in real-world projects. Now we better understand what to expect and what we can offer to our clients.

Some of the results can be viewed in the video clip below:

View the video here.

So, is there a one-size-fits-all solution when it comes to harnessing AR technology? The answer is no. This is simply one way of doing things, which has allowed us to learn some best practices and insights for future projects. We will keep experimenting with other solutions, such as those from 3Dflow, or even develop our own as AR technology becomes ever more popular.

Building an AR app for mobile: Conclusions

Our experiments have demonstrated that current AR technologies are ready for production. While some perform well out of the box, others may require additional fine-tuning. However, it’s already possible to create functional solutions with these technologies. Our research also showed numerous potential applications for photogrammetry and augmented reality. These technologies are admittedly still in their early stages, but we are already seeing the beginnings of their rise in popularity. By guiding these technologies in the right direction, you can bring a brand new experience to your users and significantly impact the way customers view your products, and the industry as a whole.

Mobile devices are among the best tools for these technologies because they’re always on hand and allow you to quickly create 3D models of real-world objects, or incorporate virtual objects into the real world. For example, you can fill a virtual shop window with product offerings, try on shoes virtually, or arrange furniture in an apartment. At this stage, we can only imagine some of the ways these technologies will be used in the future, but there will undoubtedly be many more innovative uses to come.

References

Augmented reality: the future is now

Everything You Need to Know About USDZ Files

Everything You Need to Know About Using GLB Files

Using Scene Viewer to display interactive 3D models in AR from an Android app or browser

Creating a Photogrammetry Command-Line App
